← Go back

Sort and search arrays in C / C++ - PART 2

Posted on April 16, 2023 at 18:51 (GMT +00:00) by Colin

Continuing from Sort and search arrays in C / C++ - part 1, if you haven't already read it, I suggest you do that first.

In this article I will start to look at methods of partitioning arrays to increase the speed of sorting the values ascending. The idea of partitioning is basically to split the sorting of the array into "partitions".

First I will start by creating a new sort procedure and name it array_dacsort; literally meaning divide and conquer sort.

The procedure is fairly simple, especially as it is recursively calling itself. Aftering writing this procedure and doing some research on my implementation, it turns out this algorithm is known as quicksort. I used the code from the previous article to test the speed with the exact same conditions as the previous sort procedure and this one came in consecutively as 0.006s - a HUGE difference.

After reading about quicksort and looking at similar implementations, I read more about using an algorithm known as HeapSort which is considered better when using in embedded systems where memory usage may be limited.

After reading a few articles on heap sort and what it actually does, they all seemed to implement a variation of a re-arrangement loop, often called "heapify", so first was to write the code for this:

To understand this, you need ideally to understand binary trees and read into heap operations. Here we consider the array as a binary tree. The left child in a binary tree is always i*2+1, where i is the index of the parent.

Now we need to start building and sorting the heap. We start from half way down the array and decrement, then call our heapify procedure at the current step index to re-arrange the values.

Next we will actually sort the full re-arranged array using the same heapify procedure, again from upper to lower.

The final implementation of our heap sort with some slight changes looks like this:

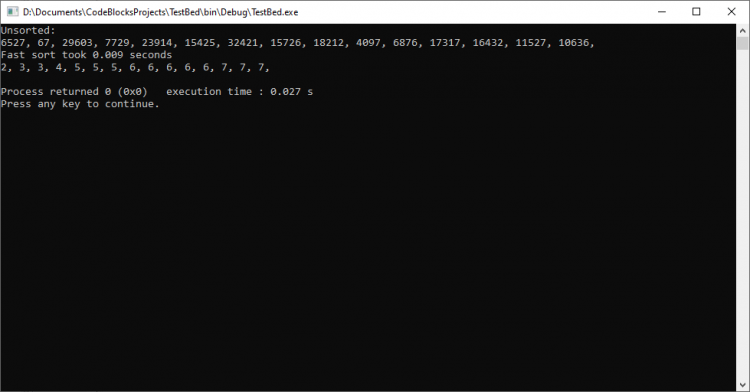

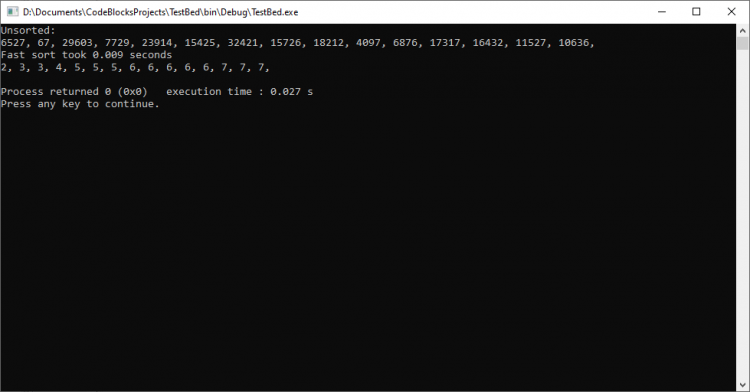

This one took a little longer for me to wrap my head around, but so far it works! Now for speed testing with the same setup as earlier.

This sorted the 50000 random values consecutively 6 times in a row at 0.009s.

That wraps up this article for now. In the next follow-up I plan to move on to the searching algorithms, but I may also swing back to sorting to build some kind of hybridized algorithm and maybe also turn everything into a fully fledged class for re-usability.

Now adding some additional notes for myself - I may also try writing some sorting and searching algorithms for different data types.

Thank you for reading, please drop me any suggestions and recommendations you have or if you've found this article interesting drop me a comment below!

That's all for this entry into the Captain's log for now, keep checking back for more!

In this article I will start to look at methods of partitioning arrays to increase the speed of sorting the values ascending. The idea of partitioning is basically to split the sorting of the array into "partitions".

First I will start by creating a new sort procedure and name it array_dacsort; literally meaning divide and conquer sort.

//

// procedure to sort the array using partitioning and recursion

//

void array_dacsort(int a[], int lower, int upper) {

// if lower index is higher or equal to upper just return

if (lower >= upper) return;

// let's start to calculate the pivot of the partition starting with the passed upper index of the array

int pivot = a[upper];

// we will use "i" as a track index

int i = lower - 1;

// loop through our array from lower to upper indexes

for (int j = lower; j < upper; j++)

// if the value of the current index is lower than the array pivot, we should increment the track index and swap the values

if (a[j] < pivot) std::swap(a[++i], a[j]);

// swap the last upper value with the current track index + 1

std::swap(a[i+1], a[upper]);

// now we will do a recursive call to sort the 2 partitioned parts

array_dacsort(a, lower, i);

array_dacsort(a, i+2, upper);

}The procedure is fairly simple, especially as it is recursively calling itself. Aftering writing this procedure and doing some research on my implementation, it turns out this algorithm is known as quicksort. I used the code from the previous article to test the speed with the exact same conditions as the previous sort procedure and this one came in consecutively as 0.006s - a HUGE difference.

After reading about quicksort and looking at similar implementations, I read more about using an algorithm known as HeapSort which is considered better when using in embedded systems where memory usage may be limited.

After reading a few articles on heap sort and what it actually does, they all seemed to implement a variation of a re-arrangement loop, often called "heapify", so first was to write the code for this:

//

// procedure to re-arrange values obeying heap properties.

//

void array_heapify(int a[], int parent, int len) {

// upper is our current upper maximum index

int upper = parent;

// simple while loop

while (true) {

// set the left index like a binary tree, we want the left child which is parent * 2 + 1

int left = parent * 2 + 1;

// right is 1 index higher

int right = left + 1;

// self explanatory

if ((left < len) && (a[left] > a[upper])) upper = left;

if ((right < len) && (a[right] > a[upper])) upper = right;

if (upper == parent) break;

std::swap(a[parent], a[upper]);

parent = upper;

}

}To understand this, you need ideally to understand binary trees and read into heap operations. Here we consider the array as a binary tree. The left child in a binary tree is always i*2+1, where i is the index of the parent.

Now we need to start building and sorting the heap. We start from half way down the array and decrement, then call our heapify procedure at the current step index to re-arrange the values.

for (int i = (len / 2 - 1); i >= 0; i--)

array_heapify(a, i, len);Next we will actually sort the full re-arranged array using the same heapify procedure, again from upper to lower.

for (int i = len - 1; i >= 0; i--) {

// swap the root element

std::swap(a[0], a[i]);

// re-arrange using our heapify procedure

array_heapify(a, 0, i);

}The final implementation of our heap sort with some slight changes looks like this:

//

// procedure to re-arrange values obeying heap properties.

//

inline void array_heapify(int a[], int parent, int len) {

// upper is our current upper maximum index

int upper = parent;

// simple while loop

while (true) {

// set the left index like a binary tree, we want the left child which is parent * 2 + 1

// use bitwise instead of multiplication

int left = (parent << 1) + 1;

// right is 1 index higher

int right = left + 1;

// self explanatory

if ((left < len) && (a[left] > a[upper])) upper = left;

if ((right < len) && (a[right] > a[upper])) upper = right;

if (upper == parent) break;

std::swap(a[parent], a[upper]);

parent = upper;

}

}

//

// our heapsort procedure that is basically the loops calling our re-arrange

//

void array_heapsort(int a[], int len) {

// build the heap

for (int i = (len / 2 - 1); i >= 0; i--)

array_heapify(a, i, len);

// sort the heap

for (int i = len - 1; i >= 0; i--) {

// swap the root element

std::swap(a[0], a[i]);

// re-arrange using our heapify procedure

array_heapify(a, 0, i);

}

}This one took a little longer for me to wrap my head around, but so far it works! Now for speed testing with the same setup as earlier.

This sorted the 50000 random values consecutively 6 times in a row at 0.009s.

That wraps up this article for now. In the next follow-up I plan to move on to the searching algorithms, but I may also swing back to sorting to build some kind of hybridized algorithm and maybe also turn everything into a fully fledged class for re-usability.

Now adding some additional notes for myself - I may also try writing some sorting and searching algorithms for different data types.

Thank you for reading, please drop me any suggestions and recommendations you have or if you've found this article interesting drop me a comment below!

That's all for this entry into the Captain's log for now, keep checking back for more!

Search

Popular articles

Tags

Accident Adoption Albums Algorithms Altcoins Animal Rescue AnL Aquarium Arma ArmA nd Leg Array Partitioning Arrays Assembler Assembly Atanasovsko Award Bazaar Binary Search Bitcoin BMW Bohemia Bootstrap Bulgaria Bulgaria Za Mladite Burgas C C++ car Celerity Charity Chemical Records Chemical Shock Chemlight Code Competition Compiler Contest Converter Covid-19 CPP Crypto Cryptocurrency css Data Recovery Database DataTables Decode Dedicated Dedicated Server Delphi Development Dialogs Divide & Conquer DIY DnB Drum & Bass E30 Earplugs Event Exchange Eyes Fish Floating-point Flood Fog Fracture Frank Harrison Freeware Function Funny Gallery Game Game Development Game Modding Gaming Garden Gardening Gazebo GERB GitHub Glitch Hamish Harding Happy New Year Heapify HeapSort Helga HTML HTML entities Introduction Jackie JavaScript JQuery Jungle Lake Language Linker Listbox ListNBox lnbSetTooltip m52b25 MariaDB Maths Merry Christmas Mini Mining Miningpoolhub Mist Modding MPH Multiplayer Music MySql NGO OceanGate One Nature ORCA organisation OverHertz Paludarium Pandemic Pascal Paul-Henri Nargeolet Paws Furever Pergola Persistence Pets Photography Pier Plugin Programming progress bar Project PX Library Quicksort r53 Racing Replace RIP Scripting Searching Server Shahzada Dawood Simulation Simulator Smoke Snippet Social media Software Sorting Source Sourcecode SQF Statistics Stockton Rush String Strings Submarine Suleman Dawood Terrain Detail Text Titan Tool Tragedy tutorial UCS4Char UE4 unicorn Unreal Engine Victims View Distance ViewBug web Web Development Website Welcome Woodworking Ziron Zynk